The Neomonolith

October 2015

Monolith or Microservices?

In the modern Silicon Valley startup zeitgeist, the CTO of a formative software company faces a pivotal decision. Will the fledgling software service be a monolith or microservices? The stakes are high: rewrites are measured in man-years.

There is no industry consensus on whether either approach is strictly better. Despite that ambivalence, there is general agreement on two points:

- Monoliths that exceed some threshold will be broken apart eventually. At a large enough scale, only a service-oriented design is workable.

- There are only two options. It's either service-oriented or a monolith, and you must choose.

The Problem

Much has been written contrasting the pros and cons of monolith and microservice designs in a general context. [1] This territory is well covered, so I omit discussion of it here. Instead, we will take a narrower focus on the initial stage of the business. The neomonolith is most suited to this formative period: when scale is small and code changes land at a breakneck pace.

You can begin with a monolith. Sometimes this is called a sacrificial architecture because of the inevitable transition to services at large scale. It will enable immediate, rapid progress but inevitably ossify your codebase in a Kafkaesque entanglement of broken interfaces. That may be a reasonable tradeoff: technical debt is irrelevant if the business fails.

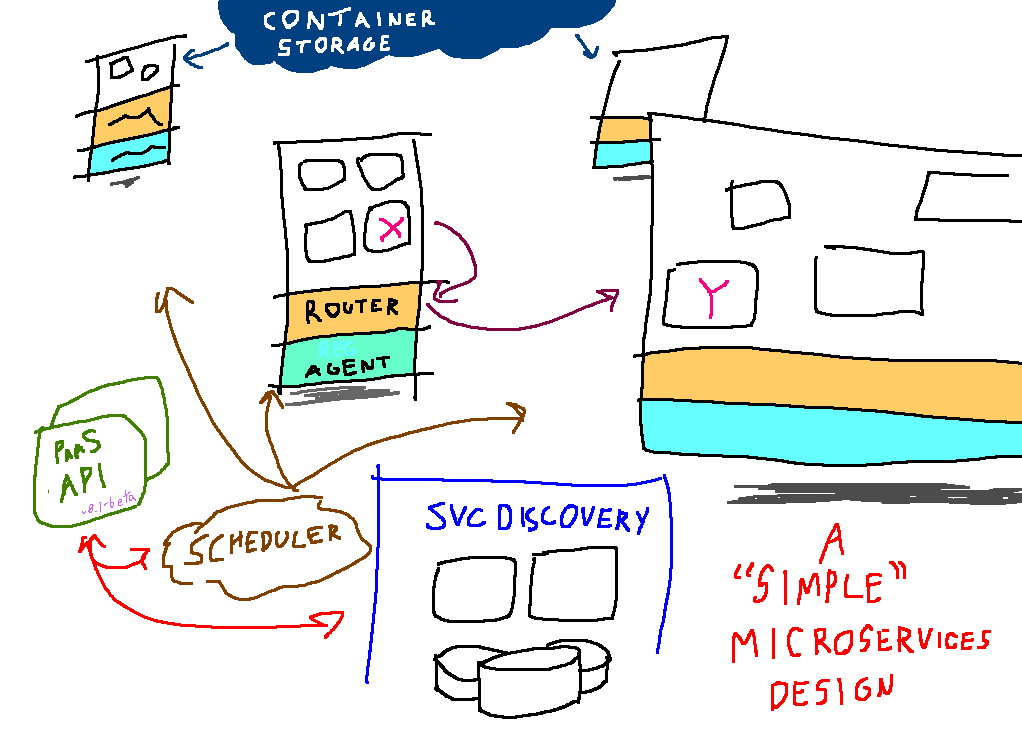

Alternatively, you can construct a microservices architecture from the get-go. Such a design comes with a steep premium. You must stand up and operate new pieces of infrastructure, most of which are non-standardized, alpha-quality and changing rapidly. In addition, with a monolith, the cost of setting up monitoring, alerting, configuration, and local development is paid once. With a microservice design, that cost must be paid for every service. Starting with microservices is such a risky proposition for a startup that many proponents of the design recommend building monolith-first.

A False Architectural Dichotomy

This architectural question is a false dichotomy: there is an intermediate, hybrid option. I call it the Neomonolith. The neomonolith is a superior design for the nascent systems built by startups. It combines strengths of both approaches with a less onerous set of shortcomings. I've had great success applying this pattern while building both ngrok.com and equinox.io.

The ideas described herein are not new. Even this repackaging is not genuinely novel. Other efforts have promoted similar architectural designs, but most are couched in the context of a particular language or framework. [2] The neomonolith pattern is not limited to a particular language or framework – indeed, one of its strengths is the tolerance of heterogeneity in implementation languages!

What is the Neomonolith?

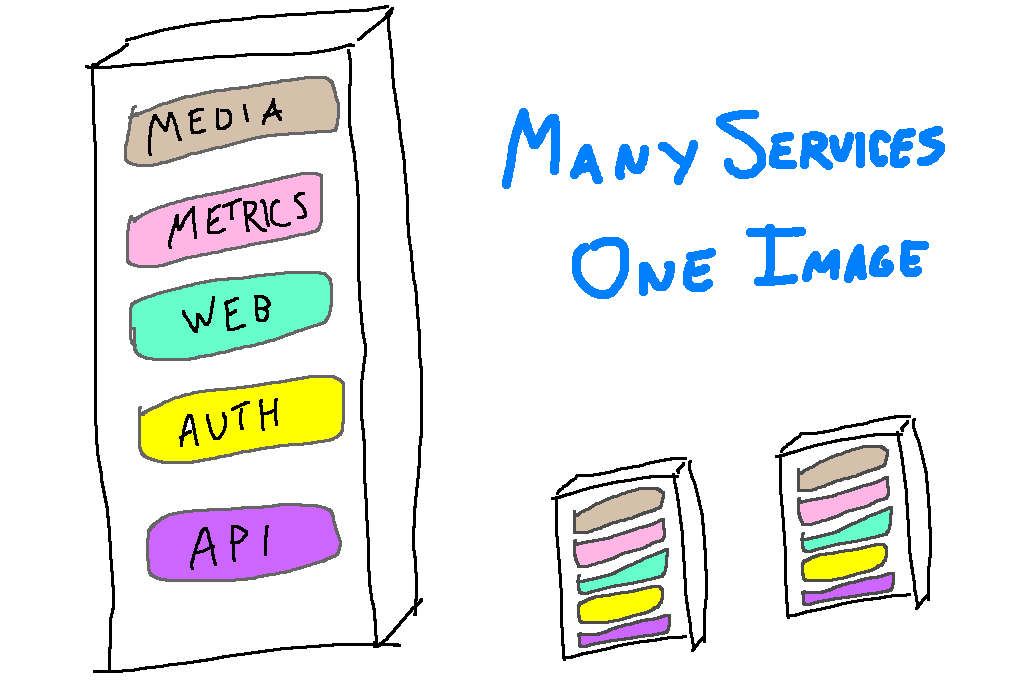

A neomonolith can be conceptualized as a special-case deployment of a microservice architecture.

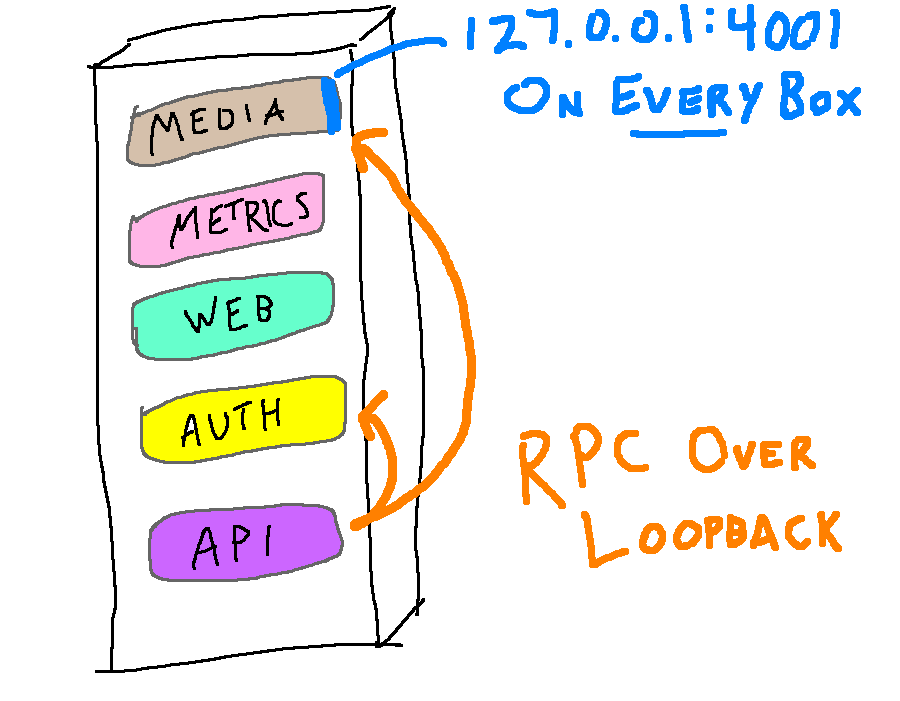

Like a microservices architecture, a neomonolith is composed of small, independent services communicating with well-defined RPC interfaces. But like a monolith, each machine runs the same set of code. Each server is an identical image that runs all of the individual services. All services start when a host boots and stop when it shuts down.
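To make the "start on boot, stop at shutdown" lifecycle concrete, here is a minimal sketch of a host-level launcher. It is only an illustration: the service names and commands are hypothetical placeholders (each one just sleeps), and in practice an init system would typically play this role.

```python
import subprocess
import sys

# Hypothetical services: every host runs this same fixed set.
# Each command is a stand-in that sleeps; a real image would list its own binaries.
SERVICES = {
    "auth": [sys.executable, "-c", "import time; time.sleep(60)"],
    "billing": [sys.executable, "-c", "import time; time.sleep(60)"],
}


def start_all(services):
    """Start every service and return a mapping of name -> process handle."""
    return {name: subprocess.Popen(cmd) for name, cmd in services.items()}


def stop_all(procs, timeout=5.0):
    """Ask every service to terminate, then wait; kill any that do not exit in time."""
    for proc in procs.values():
        proc.terminate()
    for proc in procs.values():
        try:
            proc.wait(timeout=timeout)
        except subprocess.TimeoutExpired:
            proc.kill()
            proc.wait()
```

There is no scheduler here: the set of processes is static and identical on every machine, which is the point.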

Services communicate with each other over ports bound on the loopback interface. A service listens on the same port regardless of which host it runs on, obviating the need for any service discovery system.
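As a rough sketch of what "no service discovery" means in code, the lookup can be a static table shared by every service. The service names and port numbers below are hypothetical; the only assumption carried over from the text is that a given service binds the same loopback port on every host.

```python
LOOPBACK = "127.0.0.1"

# Hypothetical port assignments, identical on every host.
SERVICE_PORTS = {
    "auth": 7001,
    "billing": 7002,
}


def service_address(name):
    """Return the (host, port) pair for a service; it is the same on every machine."""
    return (LOOPBACK, SERVICE_PORTS[name])


def service_url(name, path="/"):
    """Build an HTTP URL for a service, assuming it speaks HTTP on its fixed port."""
    host, port = service_address(name)
    return f"http://{host}:{port}{path}"
```

A caller would use something like `service_url("auth", "/health")` without consulting any registry at runtime.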

Containers are optional. They can be helpful if you are struggling with resource contention between misbehaving services, but they are easy to add later if you are not prepared to pay their operational cost up front.

The Best of Both Worlds

The neomonolith provides the best of both worlds, combining benefits from both monolith and microservice styles:

Microservice benefits

- Independence of development - services can be developed independently, even in different code repositories

- Independence of deployment - services can be deployed without affecting others

- Independence of failure - e.g. a file descriptor leak in one process will not affect others on the same host

- Enforced interfaces and the single responsibility principle - services are small and must communicate over well-defined interfaces

- Heterogeneity of language/frameworks - services communicate over APIs, allowing teams to "use the right tool for the job" if that would be more productive

Monolith Benefits

- No orchestration complexity - all boxes run an identical set of code and processes

- No dynamic service scheduling - services start on boot, halt at shutdown

- No service discovery - services listen on the same port on all hosts

- Easy scaling - capacity can be added by deploying additional machines with the same image behind a load balancer

- Defer distributed systems problems - use of loopback enables you to defer robust handling of uncommon failures caused by unreliable networks [3]

Three of the monolith benefits listed begin with the word 'No'. Anyone who has operated large-scale systems understands why. Every additional piece of infrastructure you run is an operational liability; each adds another point of failure. Institutional knowledge and software must be built to configure, monitor and alert on these new pieces.

But beyond that, the infrastructure of a typical microservice PaaS deployment bears yet another oft-ignored cost. Complicating the production infrastructure makes a software system harder to reason about. It becomes more difficult to build a mental model of how the entire system operates. This makes production incidents harder to debug: "Is it our code, or a problem with the PaaS?" It also increases the knowledge and tools required for development, ratcheting up the barrier to entry for new programmers.

A neomonolith dispenses with all of that complexity, greatly simplifying the software system. Problems that still plague microservice PaaS installations are often non-issues. Deployment requires only tried-and-tested tools familiar to anyone who has done ops in the last 10 years.

Drawbacks

- No scaling independence - you must scale out all services to meet the demand of the most resource-intensive one

- Requires investment in operational tooling - monitoring, alerting, and local dev must be repeated or reusable for each service

- Refactoring across RPC interfaces - it's still more difficult than refactoring over a monolith's functions

- Limited failure isolation - if a service dies, its consumers can't talk to another one running on a different host
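The first drawback is easy to quantify with some back-of-the-envelope arithmetic. The sketch below uses invented demand and capacity figures, and it makes the simplifying assumption that services on a host do not compete with each other for resources.

```python
import math


def instances_needed(demand, capacity_per_host):
    """Instances each service would need if it could be scaled on its own."""
    return {
        name: math.ceil(demand[name] / capacity_per_host[name])
        for name in demand
    }


def hosts_required(demand, capacity_per_host):
    """Hosts needed when every host runs every service: the neediest service decides."""
    return max(instances_needed(demand, capacity_per_host).values())


# Hypothetical numbers, in requests per second.
demand = {"api": 900, "thumbnails": 400, "auth": 100}
capacity_per_host = {"api": 300, "thumbnails": 50, "auth": 500}
```

With these figures, `thumbnails` needs 8 instances, so the fleet needs 8 hosts, even though 3 copies of `api` and 1 copy of `auth` would have been enough.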

A neomonolith is not a panacea. It shares faults of both monoliths and service-oriented systems. It is more effort than starting monolith-first, and it will not scale out forever like the service-oriented systems in place at Amazon or Google. On the other hand, most of us don't run at that scale. It's important to remember that not running tens of thousands of machines is an advantage that you should leverage while it lasts.

Transitioning to a complete microservice design

Once the tools improve and mature, there will be no question about whether you begin with a sacrificial monolith first. But right now, it is not the future yet.

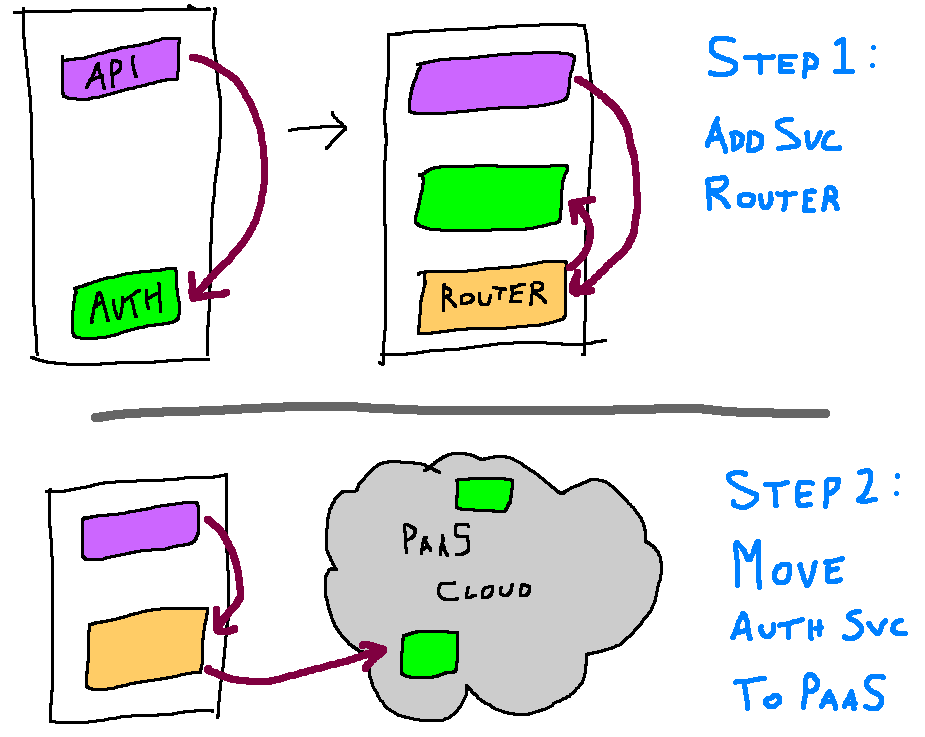

The single most important benefit of a neomonolith is the clear path to a full microservices PaaS deployment. The up-front investment in defining RPC interfaces and scoping out services means that when you're ready to deploy on a Mesos/CoreOS/Kubernetes cluster, it will require no major architectural changes. Switching to service discovery or cluster proxies instead of using loopback is a task measured in weeks, not years. Moreover, PaaS elements can be added piecemeal for a smooth transition. For example, sketching the first two steps of a hypothetical switch:

In short, not only does starting with a neomonolith today provide you with a whole host of development benefits, but it additionally positions your engineering team for a painless transition in the future if you must scale up to a more traditional service-oriented design.
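One way to picture a piecemeal move away from loopback is to route every address lookup through a single function that can be overridden per service. This is a sketch under stated assumptions, not a prescribed migration path: the `<NAME>_ADDR` environment variable convention, the service names, and the ports are all hypothetical.

```python
import os

LOOPBACK = "127.0.0.1"

# Hypothetical fixed ports, as in a loopback-only deployment.
SERVICE_PORTS = {"auth": 7001, "billing": 7002}


def resolve(name, environ=None):
    """Return (host, port) for a service.

    Defaults to the fixed loopback port. If a hypothetical override such as
    BILLING_ADDR=10.0.0.5:9000 is set, that address is used instead, so one
    service at a time can be moved off the shared host.
    """
    env = os.environ if environ is None else environ
    override = env.get(f"{name.upper()}_ADDR")
    if override:
        host, _, port = override.rpartition(":")
        return (host, int(port))
    return (LOOPBACK, SERVICE_PORTS[name])
```

Callers never change; only the resolution behind `resolve` does, whether the override eventually comes from an environment variable, a cluster proxy, or a service discovery system.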

Video

I gave a lightning talk at GopherCon 2015 that is the spiritual precursor to this piece in which I called this design 'The Lego Monolith'.

Footnotes

- [1] Microservice/Monolith tradeoffs

- [2] Prior Art

- [3] Yes, loopback can fail when you overflow the kernel buffers, although I've yet to observe this happen in production. Whether you choose to defer error handling and retries for network problems depends, of course, on your failure tolerance for that particular operation.